The Simple Pictures Artificial Intelligence Still Can’t Recognize

Earlier this month, Clune discussed these findings with fellow researchers at the Neural Information Processing Systems conference in Montreal. The event brought together some of the brightest thinkers working in artificial intelligence. Their reactions fell into two rough groups. One group, generally older and more experienced in the field, saw why the results made sense. They might have predicted a different outcome, but they still found the results perfectly understandable.

The second group, made up of people who perhaps hadn't spent as much time thinking about what makes today's computer brains tick, were struck by the findings. At least initially, they were surprised that such powerful algorithms could be so plainly wrong. Mind you, these were still people publishing papers on neural networks and attending one of the year's brainiest AI gatherings.

To Clune, the bifurcated response was telling: It suggested a sort of generational shift in the field. A handful of years ago, the people working with AI were building AI. These days, the networks are good enough that researchers are simply taking what’s out there and putting it to work. “In many cases you can take these algorithms off the shelf and have them help you with your problem,” Clune says. “There is an absolute gold rush of people coming in and using them.”

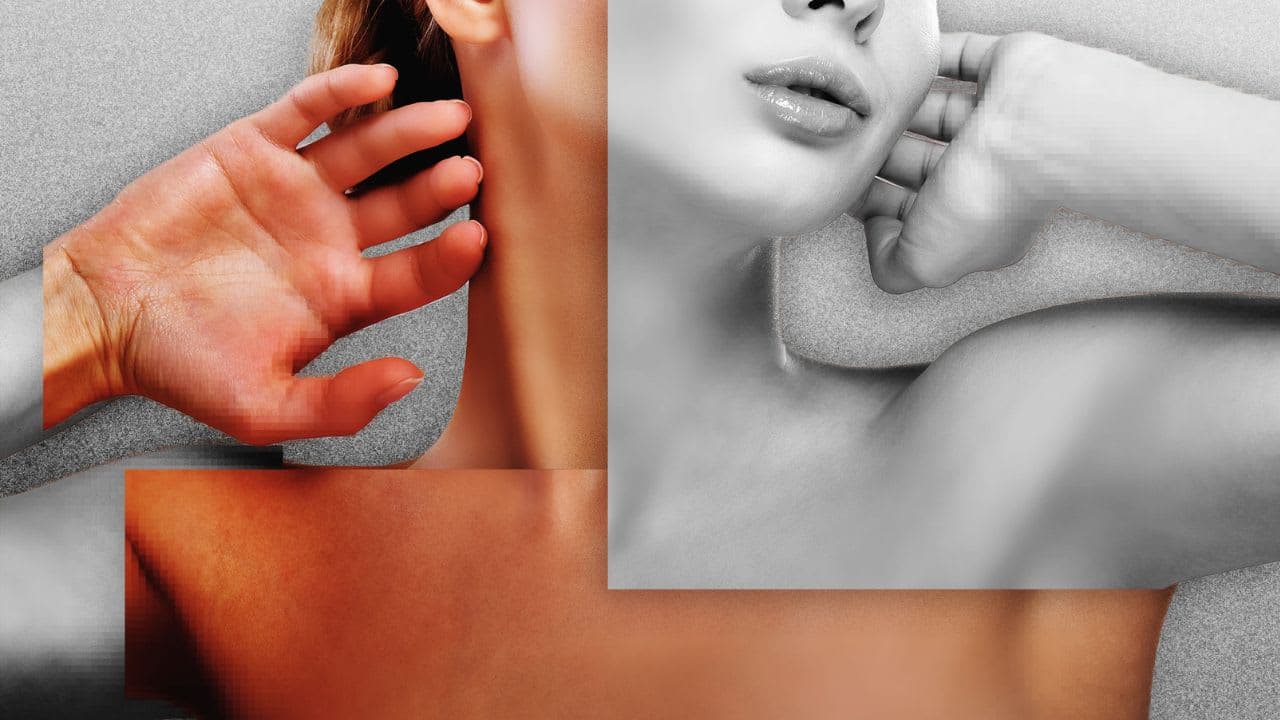

That’s not necessarily a bad thing. But as more stuff is built on top of AI, it will only become more vital to probe it for shortcomings like these. If it really just takes a string of pixels to make an algorithm certain that a photo shows an innocuous furry animal, think how easy it could be to slip pornography undetected through safe search filters. In the short term, Clune hopes the study will spur other researchers to work on algorithms that take images’ global structure into account. In other words, algorithms that make computer vision more like human vision.

But what does "recognize" mean? The two groups described above leave out a third kind of AI researcher (e.g., Oren Etzioni), who argues that for a computer to be "intelligent," it needs to understand what it "sees," not just identify or classify it. On this view, "recognizing" means understanding concepts, not just matching patterns.

Here's a video clip of Richard Feynman (HT Farnam Street) explaining why the difference between knowing the name of something and understanding it matters so much for humans.

See that bird? It’s a brown-throated thrush, but in Germany it’s called a halzenfugel, and in Chinese they call it a chung ling and even if you know all those names for it, you still know nothing about the bird. You only know something about people; what they call the bird.

[youtube https://www.youtube.com/watch?v=05WS0WN7zMQ]