Character AI has captured users' imagination with its human-like text generation. But is it secure? In this blog, we look at Character AI, its data safety measures, and the potential risks it poses: privacy concerns, identity misuse, and the spread of misinformation.

We’ll also dissect its Terms of Service and Privacy Policy, especially in the context of NSFW content. You’ll find answers to critical questions about your conversations, mobile usage, and chat retention. By the end, we’ll provide insights on safely navigating the AI landscape.

Is Character AI Really Safe?

Yes, Character.AI is generally safe. The company has taken a number of steps to protect its users, including:

- Using SSL encryption to protect user data.

- Having a strict policy against NSFW content.

- Having a team of moderators who review user conversations for any potential violations of the company’s terms of service.

- Giving users the ability to report any suspicious or inappropriate behavior.

Understanding Character.AI

Character.AI is a neural language model chatbot service that can generate human-like text responses and participate in contextual conversation. It was launched in September 2022 by Noam Shazeer and Daniel De Freitas, who were previously involved in the development of Google’s LaMDA language model.

Character.AI allows users to create and interact with fictional characters, such as superheroes, villains, historical figures, or even their own original creations.

Characters can be designed to have their own unique personalities, knowledge, and dialogue styles. Users can also choose to play a role in their conversations with characters, or simply observe as the characters interact with each other.

How does Character AI Ensure Data Safety?

Character AI takes a number of steps to ensure data safety, including:

- SSL encryption: Character AI uses SSL encryption (in practice its modern successor, TLS) to protect all data transmitted between the user’s device and its servers.

- Data storage: Character AI stores user data in a secure environment.

- Data access: Character AI employees only have access to user data on a need-to-know basis.

- Data retention: Character AI only retains user data for as long as it is necessary for the company to provide its services.
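
If you want to see what encryption in transit looks like from the client side, here is a minimal sketch using Python's standard `ssl` module. It is not Character AI's own code. The helper names `make_strict_context` and `negotiated_tls_version` are made up for this example, and the TLS 1.2 floor is an assumption rather than a documented setting of the service.

```python
import socket
import ssl
from typing import Optional


def make_strict_context() -> ssl.SSLContext:
    """Build a client context that verifies certificates and hostnames."""
    # create_default_context() enables certificate and hostname checks.
    context = ssl.create_default_context()
    # Assumption for this sketch: refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def negotiated_tls_version(host: str, port: int = 443,
                           timeout: float = 5.0) -> Optional[str]:
    """Connect to a host and return the protocol it negotiated."""
    context = make_strict_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        # The handshake fails with ssl.SSLError if the certificate is invalid.
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()
```

Calling `negotiated_tls_version()` with the hostname of any HTTPS site either returns the protocol version that was agreed on or raises an error if the certificate cannot be verified. A check like this only tells you that the connection is encrypted. It says nothing about how data is stored or who can access it once it reaches the server.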

Three Potential Risks Posed by Character.AI

1. Privacy concerns

Character.AI allows users to create virtual characters that resemble real people. This raises concerns about the privacy of the individuals whose likenesses are being used without their consent. For example, someone could create a fake character that looks like a celebrity or politician and use it to spread misinformation or propaganda.

2. Misuse of identity

Character.AI can also be used to create fake social media profiles or impersonate other people. This could be used to commit identity fraud, harass others, or spread misinformation.

3. Spread of misinformation and deception

Character.AI can also be used to generate realistic-looking but fake text and images. Such content could feed deepfakes or other forms of misinformation that are difficult to distinguish from real content, and could in turn be used to manipulate public opinion, damage reputations, or even interfere with elections.

What are Character AI’s Terms of Service and Privacy Policy?

Terms of Service

The ToS covers a wide range of topics, including:

- Eligibility: To use Character AI, you must be at least 13 years old and agree to the ToS.

- Prohibited Content: You are prohibited from using Character AI to generate or share content that is illegal, harmful, or otherwise violates the ToS.

- Intellectual Property: Character AI owns all intellectual property rights in the Character AI platform and its content.

- User Accounts and Passwords: You are responsible for maintaining the confidentiality of your password and account.

Privacy Policy

- Personal information: Character AI collects certain personal information from its users, such as email addresses, IP addresses, and device information.

- Use of personal information: Character AI uses personal information to provide and improve the Character AI service.

- Sharing of personal information: Character AI may share personal information with its affiliates, service providers, and other third parties as necessary to provide and improve the Character AI service.

- Data retention: Character AI retains personal information for as long as necessary to provide the Character AI service and to comply with legal obligations.

About Character.AI and Not Safe For Work (NSFW) Content

Character.AI is an AI chatbot platform that allows users to interact with fictional characters in natural language conversations.

It is currently in beta and does not allow NSFW content. This means that users cannot generate or discuss sexually explicit content on the platform. Character.AI has a policy against NSFW content for a number of reasons.

First, they want to protect their users from inappropriate and harmful content. Second, they want to maintain a safe and welcoming environment for all users. Third, they believe that NSFW content can be disruptive and detract from the overall quality of the platform.

Can Character.AI see your Conversations?

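Other users cannot see your conversations, but Character.AI itself can. Your chats are private to your account: other users cannot view them, and you cannot see theirs. Sharing your chat log is optional through the character or conversation-sharing option. The company, however, saves chats on its servers, and as described elsewhere in this article, its moderators may review conversations for potential violations of the terms of service.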

Is Character.AI Safe for Mobile Use?

Yes, Character.AI is safe for mobile use. It has a mobile-friendly website and a mobile app available on the Google Play Store and the Apple App Store.

Character.AI uses the same security measures on its mobile platforms as it does on its desktop website. This includes SSL encryption and a transparent privacy policy.

Does Character.AI Save Your Chats?

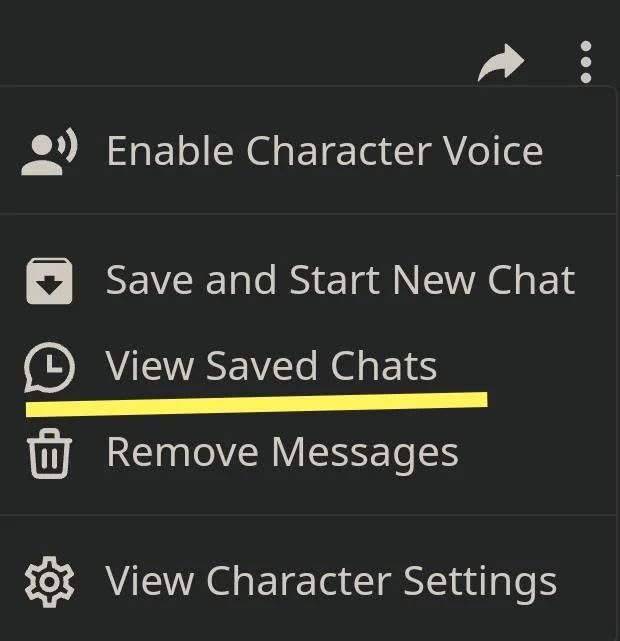

Yes, Character.AI saves your chats. This allows you to pick up conversations where you left off and to review your previous interactions with the characters.

To view your chat history, go to the “Chats” tab on the Character.AI website or in the app. You will see a list of all the characters you have chatted with, along with the date and time of your last conversation. To view a specific conversation, click on the character’s name.

You can also download your chat history to a file by clicking the “Download” button in the top right corner of the chat window.

Is Character.AI Safe to Log in and Use?

Yes, Character.AI is generally safe to log in and use. It has several security measures in place to protect its users, including SSL encryption to protect user data in transit.

It also provides a transparent privacy policy that explains how user data is collected and used. It has a team of security experts who monitor the platform for threats.

FAQs

What content safety measures does Character AI have in place?

Character AI systems implement content safety measures to ensure responsible usage. These include content filters, pre-trained models, user reporting, and real-time monitoring. These measures help prevent the generation of harmful or inappropriate content, making AI-generated text safer for users.
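
To make the idea of a content filter plus user reporting more concrete, here is a deliberately simple sketch. It is an illustration only and not how Character AI actually works: a real filter would rely on trained classifiers rather than a word list. The names `BLOCKED_TERMS`, `ModerationQueue`, `is_allowed`, and `filter_reply` are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical placeholder terms; a real system would use trained models.
BLOCKED_TERMS = {"blockedword", "anotherblockedword"}


@dataclass
class ModerationQueue:
    """Collects user reports so a human moderator can review them later."""
    reports: list = field(default_factory=list)

    def report(self, message_id: str, reason: str) -> None:
        self.reports.append((message_id, reason))


def is_allowed(text: str) -> bool:
    """Return False if any blocked term appears as a whole word."""
    # Strip common punctuation and lowercase each word before comparing.
    words = {w.strip(".,!?;:\"'").lower() for w in text.split()}
    return words.isdisjoint(BLOCKED_TERMS)


def filter_reply(text: str, placeholder: str = "[filtered]") -> str:
    """Pass clean replies through and replace flagged ones."""
    return text if is_allowed(text) else placeholder
```

In this sketch the filter runs before a reply is shown, while the report queue covers whatever the filter misses. That pairing of automated and human checks is the reason platforms usually combine several of the measures listed above.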

What are potential risks or misuses associated with Character AI?

Potential risks and misuses of Character AI encompass misinformation, hate speech, impersonation, privacy violations, bias, and security vulnerabilities. Users and developers need to be aware of these risks and take steps to mitigate them.

What can users do to use Character AI safely?

Users can enhance the safe use of Character AI by reviewing and editing generated content, enabling content filters, avoiding the sharing of personal information, reporting misuse, and staying informed about the system’s capabilities and limitations.
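
One practical way to avoid sharing personal information is to scrub a message before you paste it into any chatbot. The sketch below is a rough example that assumes simple regular expressions are good enough for email addresses and phone numbers. The patterns and the `redact` helper are made up for this example, and they will miss many formats.

```python
import re

# Rough patterns for illustration; real-world formats vary widely.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with placeholders."""
    text = EMAIL_RE.sub("[email]", text)
    return PHONE_RE.sub("[phone]", text)


message = "Mail me at jane.doe@example.com or call +1 555-123-4567."
print(redact(message))  # Mail me at [email] or call [phone].
```

A scrubber like this is a safety net, not a guarantee. Names, addresses, and other identifying details still need a manual check before you hit send.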

How does Character AI handle NSFW content?

Character AI systems address Not Safe For Work (NSFW) content by employing content filters, issuing warnings, offering customization options, and allowing users to report such content. While these measures reduce the likelihood of NSFW content, users should still exercise caution and review generated text to ensure its appropriateness.

Conclusion: Is Beta Character AI Safe?

The Beta Character AI website is reputable and generally secure. It protects data in transit with SSL encryption and is transparent about its terms of service and privacy policy.

In addition, the platform’s default NSFW filter reduces the chance of running into inappropriate or offensive content. No filter is perfect, though, so it is still worth exercising some caution and reporting anything that slips through.